Hello,

I use HailoRT 4.20.

I have successfully converted a custom-trained YOLOv8s model to HEF, and got results very similar to the ONNX model.

Next, I trained a custom OCR model

and tried to optimize it to HEF in a similar way.

I used hailomz optimize…

and modified the .alls, .yaml, and post-process files.

I saw a dramatic drop in performance for the .hef version of the OCR model.

I tried to run noise analysis to find the spot that needs fixing, but it fails with a channel-mismatch error, apparently due to some Hailo implementation issue, even though the model itself gets converted correctly.

So I am looking for an alternative way to examine the model structure, in order to find a layer that gets quantized poorly.

The method I chose is:

1. Convert only the first layer.

2. Quantize it.

3. Compare its output to the output of the ONNX model's first layer.

4. Add another layer and do the same, until there is too much mismatch between the output tensors.

Then I will know the problematic layer.
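The loop described above can be sketched as follows. This is a minimal sketch, assuming the outputs of each truncated model (ONNX reference and dequantized HEF) have already been collected as NumPy arrays, one pair per depth. The names `relative_error` and `first_mismatching_depth` and the tolerance of 0.1 are hypothetical and not part of any Hailo tool.

```python
import numpy as np

def relative_error(ref, test):
    """Relative L2 error between a reference tensor and a test tensor."""
    ref = np.asarray(ref, dtype=np.float64)
    test = np.asarray(test, dtype=np.float64)
    # Small epsilon avoids division by zero for an all-zero reference.
    return float(np.linalg.norm(ref - test) / (np.linalg.norm(ref) + 1e-12))

def first_mismatching_depth(ref_outputs, test_outputs, tol=0.1):
    """Return the 1-based depth of the first truncated model whose output
    differs from the reference by more than `tol`, or None if all match.

    ref_outputs[i] and test_outputs[i] are the outputs of the model
    truncated after layer i + 1 (assumed layout, chosen for this sketch).
    """
    for depth, (ref, test) in enumerate(zip(ref_outputs, test_outputs), start=1):
        if relative_error(ref, test) > tol:
            return depth
    return None
```

A mismatch reported at depth 1 usually says more about the inputs or the comparison itself than about that layer, so it is worth checking the inputs before trusting the result.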

I implemented everything, but at step 3 (comparing only the first quantized layer's output) I saw a very large mismatch between it and the ONNX output. (I ran a validation set through both and compared the tensors.)

Is my approach good? Am I missing something? I would appreciate any suggestions.

Hi @user804,

Your layer-by-layer bisection approach is valid, but the large mismatch at the very first layer strongly suggests a preprocessing/normalization discrepancy between your ONNX inference pipeline and the Hailo quantized graph rather than a quantization issue. It’s worth verifying that the input fed to both paths is byte-identical (same normalization, value range, and channel order) and that the .alls normalization_params reflect what your OCR model actually expects, since these can differ significantly from what worked for YOLOv8. Comparing dequantized Hailo outputs against ONNX outputs using cosine similarity (rather than absolute error) will also give a more meaningful picture. Once that first layer lines up, the bisection approach should work well for narrowing down any quantization-sensitive layers further along the graph.
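A rough sketch of those checks in NumPy is below. It assumes a plain affine quantization scheme, where a real value is recovered as `(q - zero_point) * scale`; the `scale` and `zero_point` arguments and all function names here are hypothetical placeholders, so take the actual values from your own pipeline. Also make sure both tensors use the same layout (for example NCHW versus NHWC) before comparing them.

```python
import numpy as np

def inputs_identical(x_ref, x_test):
    """True only if both inputs have the same dtype, shape, and values."""
    x_ref, x_test = np.asarray(x_ref), np.asarray(x_test)
    return (
        x_ref.dtype == x_test.dtype
        and x_ref.shape == x_test.shape
        and bool(np.array_equal(x_ref, x_test))
    )

def dequantize(q, scale, zero_point):
    """Map quantized integers back to floats (affine scheme, assumed)."""
    return (np.asarray(q, dtype=np.float32) - zero_point) * scale

def cosine_similarity(a, b):
    """Cosine similarity between two tensors, flattened to vectors."""
    a = np.asarray(a, dtype=np.float64).ravel()
    b = np.asarray(b, dtype=np.float64).ravel()
    denom = np.linalg.norm(a) * np.linalg.norm(b)
    # Define the similarity as 0 when either tensor is all zeros.
    return float(a @ b / denom) if denom > 0 else 0.0
```

Averaging `cosine_similarity` over the validation set gives one number per layer that can be tracked as layers are added.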

Thanks,

Thank you Michael!

Indeed, I saw that for the first layers the cosine similarity is 0.9999, and that its value decreases the more layers I add to the model.

I have a few follow-up questions:

- Why is cosine similarity a good metric for evaluation? Is it because it is invariant to amplitude?

- Is there another way you would suggest I evaluate?

- When I do find the problematic layer or layers, how do you suggest I deal with them? (I was thinking of somehow redefining the quantization parameters for those layers via the .alls file.)

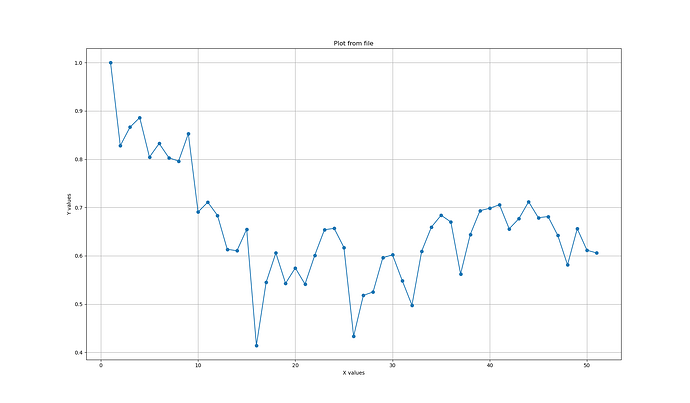

These are the results I got from the check:

The X axis is the number of layers.

The Y axis is the average cosine similarity between ONNX and HEF over the 2000 validation images.

I think the issue lies in the first and tenth layers, because the similarity falls dramatically after them. I would appreciate your insights.

Thanks in advance.

I just noticed that the drops at x = 2, x = 10, x = 16, and so on always occur at a BatchNorm2D layer.
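Reading the drop points off a plot by eye can be replaced by a small helper that ranks the per-layer drops. This is only a sketch: `largest_drops` is a hypothetical name, and the numbers in the example are made up for illustration, not taken from the plot above.

```python
def largest_drops(similarity_by_depth, top_k=3):
    """Return the 1-based depths with the largest similarity drop
    relative to the previous depth, largest drop first.

    similarity_by_depth[i] is the average cosine similarity of the
    model truncated after layer i + 1.
    """
    drops = []
    for i in range(1, len(similarity_by_depth)):
        delta = similarity_by_depth[i - 1] - similarity_by_depth[i]
        drops.append((delta, i + 1))  # i + 1 converts to a 1-based depth
    drops.sort(reverse=True)
    return [depth for _, depth in drops[:top_k]]

# Made-up values for illustration only.
example = [0.9999, 0.95, 0.949, 0.948, 0.90, 0.899]
print(largest_drops(example, top_k=2))
```

The depths returned can then be matched against the layer types in the model to see whether they share something in common.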

Hi @user804,

BatchNorm2D is a well-known quantization-sensitive layer.

1. Why cosine similarity - Yes, it’s scale-invariant. Quantization changes absolute magnitudes, so raw diff looks bad even when the output is functionally correct. Cosine measures directional agreement - what matters.

2. Other metrics - SNR (dB) per layer, and most importantly, task-level OCR accuracy (compare character/word accuracy between ONNX and HEF directly).

3. Fixing the BN layers -

- Better calibration data - use 500+ representative images.

- Selective higher precision in .alls: `quantization_param([conv_before_bn_layer_name], precision_mode=a16_w16)`

- Target the Conv+BN blocks at your drop points (layers ~2, 10, 16).

- QAT (Quantization-Aware Training) - if the above isn’t enough, retrain with quantization-aware finetuning so the model learns to handle the BN folding noise.
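The additional metrics from point 2 can be sketched as below. This is a minimal sketch, not a Hailo API: `snr_db`, `edit_distance`, and `char_accuracy` are hypothetical helper names, and character accuracy is defined here as one minus the Levenshtein distance divided by the reference length, which is one common convention among several.

```python
import numpy as np

def snr_db(ref, test):
    """Signal-to-noise ratio in dB, treating (ref - test) as the noise."""
    ref = np.asarray(ref, dtype=np.float64)
    test = np.asarray(test, dtype=np.float64)
    signal_power = np.sum(ref ** 2)
    noise_power = np.sum((ref - test) ** 2)
    if noise_power == 0:
        return float("inf")   # identical tensors
    if signal_power == 0:
        return float("-inf")  # all-zero reference, non-zero error
    return float(10.0 * np.log10(signal_power / noise_power))

def edit_distance(a, b):
    """Levenshtein distance between two strings (row-by-row DP)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        cur = [i]
        for j, cb in enumerate(b, start=1):
            cur.append(min(prev[j] + 1,                 # deletion
                           cur[j - 1] + 1,              # insertion
                           prev[j - 1] + (ca != cb)))   # substitution
        prev = cur
    return prev[-1]

def char_accuracy(reference, predicted):
    """Character accuracy in [0, 1] for one OCR prediction."""
    if not reference:
        return 1.0 if not predicted else 0.0
    return max(0.0, 1.0 - edit_distance(reference, predicted) / len(reference))
```

Unlike cosine similarity, SNR is sensitive to scale: a tensor that is exactly twice the reference has a cosine similarity of 1 but an SNR of 0 dB, so the two metrics complement each other. Computing `char_accuracy` on the decoded text from both the ONNX and HEF models over the same validation images gives the task-level comparison.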