Hi,

I need a bit of help. I'm trying to run inference with the object detection example on video from an RTMP stream and send the result to another RTMP stream.

I called the script with the '--use-frame' option, and the generated pipeline looks like this:

rtmpsrc location=rtmp://input.server/live/livestream ! flvdemux ! queue ! decodebin ! queue ! videoconvert ! queue ! videoscale ! video/x-raw,format=RGB,width=640,height=640 ! queue ! synchailonet hef-path=/opt/resources/yolov8s.hef ! queue ! hailofilter so-path=/opt/resources/libyolo_hailortpp_postprocess.so function-name=yolov8s ! queue ! hailooverlay ! queue ! videoconvert ! videoscale ! video/x-raw,width=1080,height=720 ! queue ! x264enc tune=zerolatency bitrate=35000 speed-preset=superfast ! flvmux streamable=true name=mux ! rtmpsink location="rtmp://output.server/live/livestream"

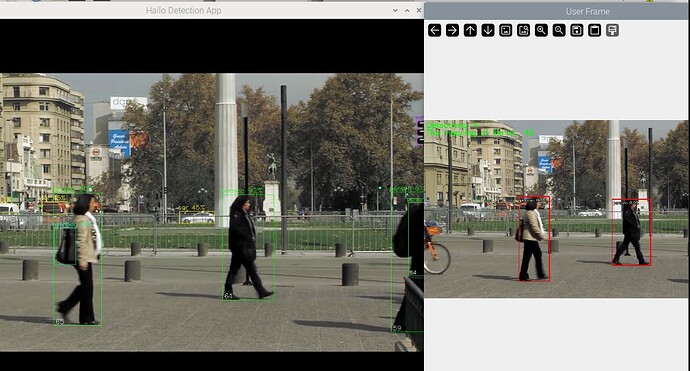

The problem is that the output stream carries the "normal" video with all the detected bounding boxes, while on screen I can see the video I actually want to send to the RTMP output server: the one showing only the 'person' detections drawn by the callback function from the object detection example.

Meanwhile, the output of the script looks like this:

Frame procesado: 130

Frame procesado: 131

Frame procesado: 132

Frame procesado: 133

Detección: ID: 0 Label: person Confianza: 0.25

BBOX: [0, 2, 637, 336]

Frame procesado: 134

Frame procesado: 135

Detección: ID: 0 Label: person Confianza: 0.56

BBOX: [2, 4, 633, 380]

Frame procesado: 136

Detección: ID: 0 Label: person Confianza: 0.62

BBOX: [0, 11, 618, 384]

Frame procesado: 137

Detección: ID: 0 Label: person Confianza: 0.69

BBOX: [0, 21, 575, 379]

Frame procesado: 138

Detección: ID: 0 Label: person Confianza: 0.67

To be clear: when you run the detection.py example with the '--use-frame' argument, two windows open. One shows all the detections produced by the Hailo device, and the other shows only the boxes and labels I choose in the callback function, which is the one I need.

I compared the two generated pipelines, and when the '--use-frame' argument is used, two lines are added in the middle of the pipeline:

queue name=inference_hailofilter_q leaky=no max-size-buffers=3 max-size-bytes=0 max-size-time=0 !

hailofilter name=inference_hailofilter so-path=/usr/local/hailo/resources/so/libyolo_hailortpp_postprocess.so function-name=filter_letterbox qos=false !
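
For anyone who wants to reproduce that comparison, here is a minimal sketch of how two pipeline strings can be diffed element by element. It assumes the pipelines are available as plain strings and splits them naively on '!', so it does not understand branching syntax such as 'inference_wrapper_crop. !'. The function names split_elements and added_elements, and the two short pipelines, are made up for this illustration and are not part of the Hailo examples.

```python
import difflib
from typing import List


def split_elements(pipeline: str) -> List[str]:
    """Naively split a gst-launch style description on '!' (assumption: no '!' inside values)."""
    return [part.strip() for part in pipeline.split("!") if part.strip()]


def added_elements(base: str, variant: str) -> List[str]:
    """Return the elements that appear in `variant` but not at the same place in `base`."""
    a = split_elements(base)
    b = split_elements(variant)
    matcher = difflib.SequenceMatcher(a=a, b=b, autojunk=False)
    added: List[str] = []
    for tag, _i1, _i2, j1, j2 in matcher.get_opcodes():
        # 'insert' and 'replace' both contribute new elements from the variant side.
        if tag in ("insert", "replace"):
            added.extend(b[j1:j2])
    return added


# Hypothetical, shortened pipelines used only to demonstrate the helper.
base_pipeline = "videotestsrc ! hailonet name=inference_hailonet ! fakesink"
variant_pipeline = (
    "videotestsrc ! hailonet name=inference_hailonet ! "
    "queue name=inference_hailofilter_q ! "
    "hailofilter name=inference_hailofilter ! fakesink"
)

for element in added_elements(base_pipeline, variant_pipeline):
    print(element)
```

With the two real pipeline strings pasted in place of the shortened ones, the printed elements should be the lines that differ between the runs.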

However, I'm unable to send that second window to the RTMP server. When I use the final pipeline I always get the full inference video, not the second one I can see on screen. The final pipeline I'm running simply replaces the last fpsdisplaysink with rtmpsink:

filesrc location="/usr/local/hailo/resources/videos/example.mp4" name=source ! queue name=source_queue_decode leaky=no max-size-buffers=3 max-size-bytes=0 max-size-time=0 ! decodebin name=source_decodebin ! queue name=source_scale_q leaky=no max-size-buffers=3 max-size-bytes=0 max-size-time=0 ! videoscale name=source_videoscale n-threads=2 ! queue name=source_convert_q leaky=no max-size-buffers=3 max-size-bytes=0 max-size-time=0 ! videoconvert n-threads=3 name=source_convert qos=false ! video/x-raw, pixel-aspect-ratio=1/1, format=RGB, width=1280, height=720 ! videorate name=source_videorate ! capsfilter name=source_fps_caps caps="video/x-raw, framerate=30/1" ! queue name=inference_wrapper_input_q leaky=no max-size-buffers=3 max-size-bytes=0 max-size-time=0 ! hailocropper name=inference_wrapper_crop so-path=/usr/lib/aarch64-linux-gnu/hailo/tappas/post_processes/cropping_algorithms/libwhole_buffer.so function-name=create_crops use-letterbox=true resize-method=inter-area internal-offset=true hailoaggregator name=inference_wrapper_agg inference_wrapper_crop. ! queue name=inference_wrapper_bypass_q leaky=no max-size-buffers=20 max-size-bytes=0 max-size-time=0 ! inference_wrapper_agg.sink_0 inference_wrapper_crop. ! queue name=inference_scale_q leaky=no max-size-buffers=3 max-size-bytes=0 max-size-time=0 ! videoscale name=inference_videoscale n-threads=2 qos=false ! queue name=inference_convert_q leaky=no max-size-buffers=3 max-size-bytes=0 max-size-time=0 ! video/x-raw, pixel-aspect-ratio=1/1 ! videoconvert name=inference_videoconvert n-threads=2 ! queue name=inference_hailonet_q leaky=no max-size-buffers=3 max-size-bytes=0 max-size-time=0 ! hailonet name=inference_hailonet hef-path=/resources/yolov8n.hef batch-size=2 vdevice-group-id=1 nms-score-threshold=0.3 nms-iou-threshold=0.45 output-format-type=HAILO_FORMAT_TYPE_FLOAT32 force-writable=true ! queue name=inference_hailofilter_q leaky=no max-size-buffers=3 max-size-bytes=0 max-size-time=0 ! hailofilter name=inference_hailofilter so-path=/usr/local/hailo/resources/so/libyolo_hailortpp_postprocess.so function-name=filter_letterbox qos=false ! queue name=inference_output_q leaky=no max-size-buffers=3 max-size-bytes=0 max-size-time=0 ! inference_wrapper_agg.sink_1 inference_wrapper_agg. ! queue name=inference_wrapper_output_q leaky=no max-size-buffers=3 max-size-bytes=0 max-size-time=0 ! hailotracker name=hailo_tracker class-id=1 kalman-dist-thr=0.8 iou-thr=0.9 init-iou-thr=0.7 keep-new-frames=2 keep-tracked-frames=15 keep-lost-frames=2 keep-past-metadata=False qos=False ! queue name=hailo_tracker_q leaky=no max-size-buffers=3 max-size-bytes=0 max-size-time=0 ! queue name=identity_callback_q leaky=no max-size-buffers=3 max-size-bytes=0 max-size-time=0 ! identity name=identity_callback ! queue name=hailo_display_overlay_q leaky=no max-size-buffers=3 max-size-bytes=0 max-size-time=0 ! hailooverlay name=hailo_display_overlay ! queue name=hailo_display_videoconvert_q leaky=no max-size-buffers=3 max-size-bytes=0 max-size-time=0 ! videoconvert name=hailo_display_videoconvert n-threads=2 qos=false ! queue name=hailo_display_q leaky=no max-size-buffers=3 max-size-bytes=0 max-size-time=0 ! videoconvert ! videoscale ! video/x-raw,width=1080,height=720 ! x264enc tune=zerolatency bitrate=35000 speed-preset=superfast ! flvmux streamable=true name=mux ! rtmpsink location="rtmp://output.server/live/livestream"

In short, I need to send the '--use-frame' window to the RTMP server. Does anyone know how?