- Model: NanoTrack (Siamese tracker, MobileNetV3 backbone, BAN head)

- Head type: `DepthwiseBAN` (`ban_v2.py`)
- Checkpoint: `nanotrackv2.pth` (48-channel version)
- Config: `BAN.TYPE = DepthwiseBAN` (`configv2.yaml`)
- Exported with: PyTorch 1.13.1 → ONNX opset 14
- Target HW: Hailo-8L
- SDK versions:
  - Hailo DFC 3.29.0
  - HailoRT 4.19.0

I’m trying to run the NanoTrack head (DepthwiseBAN, MobileNetV3 backbone) on Hailo-8L.

- ONNX export works fine.
- The Hailo parser runs successfully and produces a `.har`.
- The problem appears during optimization/quantization with calibration data.
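Here is a minimal sketch of assembling the calibration data as plain NumPy arrays. It rests on a few assumptions: the DFC wants float32 arrays in NHWC layout keyed by input layer name, and the head has two inputs (template and search features). The layer names and the 48x8x8 / 48x16x16 shapes are hypothetical placeholders. Read the real names and shapes from the parsed `.har`, and check the DFC 3.29 documentation for exactly which calibration types `optimize` accepts.

```python
import numpy as np

# Hypothetical input layer names; read the real ones from the parsed .har
TEMPLATE_INPUT = "nanotrack_head/input_layer1"
SEARCH_INPUT = "nanotrack_head/input_layer2"


def to_nhwc_float32(batch_nchw: np.ndarray) -> np.ndarray:
    """Convert an (N, C, H, W) batch, as produced by PyTorch, to contiguous (N, H, W, C) float32."""
    if batch_nchw.ndim != 4:
        raise ValueError(f"expected a 4-D NCHW array, got shape {batch_nchw.shape}")
    return np.ascontiguousarray(batch_nchw.transpose(0, 2, 3, 1), dtype=np.float32)


def build_calib_set(template_feats: np.ndarray, search_feats: np.ndarray) -> dict:
    """Pair template/search feature maps into one calibration dict keyed by input layer name."""
    if template_feats.shape[0] != search_feats.shape[0]:
        raise ValueError("template and search batches must have the same number of samples")
    return {
        TEMPLATE_INPUT: to_nhwc_float32(template_feats),
        SEARCH_INPUT: to_nhwc_float32(search_feats),
    }


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Placeholder shapes: 64 samples of 48-channel features (8x8 template, 16x16 search)
    z = rng.standard_normal((64, 48, 8, 8)).astype(np.float32)
    x = rng.standard_normal((64, 48, 16, 16)).astype(np.float32)
    calib = build_calib_set(z, x)
    for name, arr in calib.items():
        print(name, arr.shape, arr.dtype)
    # Assumed DFC usage (not executed here): runner.optimize(calib)
```

Printing names, shapes and dtypes like this before calling the optimizer makes it easy to confirm what is actually being handed to the DFC.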

Errors I see:

-

"float32 is not a valid CalibrationDataType" -

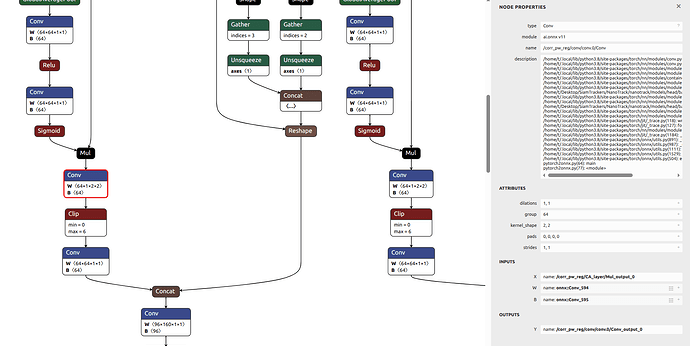

integer division or modulo by zeroinside Conv3DOp, log snippet:kernel shape: (1, 2, 2, 64), input feature: 16, groups: 64 kernel shape 3: 64 Exception encountered when calling layer 'conv_op' (type Conv3DOp). integer division or modulo by zero
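One plausible reading of the `Conv3DOp` log assumes two things: "input feature: 16" is the input channel count, and the op computes input channels per group as `input_features // groups`. With 16 input features and 64 groups that quotient is 0, and any later `/` or `%` by it raises this kind of `ZeroDivisionError`. The helper names below are hypothetical and only reproduce the arithmetic. This is not Hailo's code.

```python
def group_layout(in_channels: int, out_channels: int, groups: int) -> tuple[int, int]:
    """Return (in_per_group, out_per_group) for a grouped conv, validating the shapes.

    A valid grouped/depthwise conv needs both channel counts divisible by groups;
    depthwise means groups == in_channels, so in_per_group == 1.
    """
    if groups <= 0:
        raise ValueError("groups must be positive")
    if in_channels % groups or out_channels % groups:
        raise ValueError(
            f"in={in_channels}, out={out_channels} not divisible by groups={groups}"
        )
    return in_channels // groups, out_channels // groups


def naive_layout(in_channels: int, groups: int) -> int:
    """Unvalidated version: floor division silently yields 0 when groups > in_channels."""
    in_per_group = in_channels // groups  # 16 // 64 == 0
    return groups % in_per_group  # a later modulo by that 0 raises ZeroDivisionError


if __name__ == "__main__":
    print(group_layout(64, 64, 64))  # (1, 1): a well-formed depthwise layer
    try:
        naive_layout(16, 64)  # the numbers from the log
    except ZeroDivisionError as err:
        print("ZeroDivisionError:", err)
```

If that reading is right, the problem is on the model side. The layer that reaches `Conv3DOp` would need `groups` equal to its input channel count, so a reasonable first check is to compare the exported ONNX `Conv` node's `group` attribute against its input shape.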

It looks like some depthwise conv layers in the BAN head are being mapped to Conv3DOp and break during quantization.
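This sketch assumes the layers in question are a pysot-style depthwise cross-correlation; `ban_v2.py` may implement it differently. In that style, the template feature map is used at runtime as the *weights* of a grouped conv whose `groups` equals the channel count. The same result can be written with only slicing, element-wise multiply and add, which avoids a runtime-weight grouped conv altogether. The NumPy sketch below checks that rewrite against a direct loop implementation. The function names are hypothetical, and it is untested whether the rewritten graph compiles and quantizes well on Hailo-8L.

```python
import numpy as np


def xcorr_depthwise_ref(x: np.ndarray, z: np.ndarray) -> np.ndarray:
    """Reference depthwise cross-correlation (valid padding, stride 1).

    x: search features (N, C, H, W); z: template features (N, C, h, w).
    Each channel of x is correlated only with the same channel of z.
    """
    n, c, H, W = x.shape
    h, w = z.shape[2:]
    out = np.zeros((n, c, H - h + 1, W - w + 1), dtype=x.dtype)
    for i in range(H - h + 1):
        for j in range(W - w + 1):
            patch = x[:, :, i:i + h, j:j + w]
            out[:, :, i, j] = (patch * z).sum(axis=(2, 3))
    return out


def xcorr_depthwise_shifted(x: np.ndarray, z: np.ndarray) -> np.ndarray:
    """Same result using only slices, broadcast multiplies and adds.

    Loops over the h*w template taps (small and fixed), not over output pixels,
    so an exported version unrolls into a static chain of Slice/Mul/Add nodes.
    """
    H, W = x.shape[2:]
    h, w = z.shape[2:]
    oh, ow = H - h + 1, W - w + 1
    out = np.zeros(x.shape[:2] + (oh, ow), dtype=x.dtype)
    for u in range(h):
        for v in range(w):
            # z[:, :, u:u+1, v:v+1] broadcasts one template value per channel over the window
            out += x[:, :, u:u + oh, v:v + ow] * z[:, :, u:u + 1, v:v + 1]
    return out


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    x = rng.standard_normal((1, 48, 16, 16)).astype(np.float32)  # placeholder search features
    z = rng.standard_normal((1, 48, 8, 8)).astype(np.float32)    # placeholder template features
    a = xcorr_depthwise_ref(x, z)
    b = xcorr_depthwise_shifted(x, z)
    print(a.shape, np.allclose(a, b, atol=1e-4))  # (1, 48, 9, 9) True
```

In PyTorch the shifted version maps directly onto tensor slicing with `*` and `+`, so it could stand in for a grouped `F.conv2d` call before export. Because the template size is fixed at export time, the loop bounds become constants. The trade-off is `h * w` multiply/add pairs (64 for an 8x8 template) in place of a single conv node.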

Questions:

- Is this a known limitation or bug in the Hailo SDK with grouped/depthwise convolutions?
- How should I fix the `CalibrationDataType` issue? Is a different calibration data format expected?
- Is there a recommended way to restructure these layers so the head becomes Hailo-compatible?

Thanks for any guidance!
