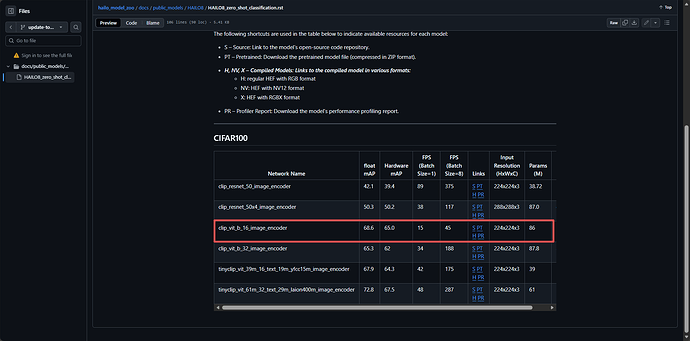

I ran into an issue with the clip_vit_b_16_image_encoder public model from hailo_model_zoo (version 2.17). The model is listed in docs/public_models/HAILO8/HAILO8_zero_shot_classification.rst on the update-to-version-2.17 branch of the hailo-ai/hailo_model_zoo GitHub repository.

I fed the same preprocessed input to both the ONNX model and the HEF model provided there, but the two output vectors do not match. Could anyone help with this? I would like to know what configuration or preprocessing changes are needed so that the HEF output matches the ONNX output.

This is the code I ran:

import onnxruntime as ort
import numpy as np
from PIL import Image
from torchvision import transforms
from torchvision.transforms import functional as TF
from hailo_platform import (HEF, VDevice, InputVStreamParams, OutputVStreamParams, FormatType,
                            HailoStreamInterface, InferVStreams, ConfigureParams, HailoSchedulingAlgorithm)

# CLIP image normalization constants
normalize = transforms.Normalize(mean=[0.48145466, 0.4578275, 0.40821073],
                                 std=[0.26862954, 0.26130258, 0.27577711])


def onnx_infer(onnx_path, img_array):
    # Create the ONNX Runtime session
    session = ort.InferenceSession(onnx_path, providers=["CPUExecutionProvider"])
    input_name = session.get_inputs()[0].name
    output_name = session.get_outputs()[0].name
    onnx_output = session.run([output_name], {input_name: img_array})[0]
    return onnx_output.squeeze()


def hef_infer(hef_path, img_array):
    first_hef2 = HEF(hef_path)
    hefs = [first_hef2]

    # Create the VDevice target with the scheduler enabled
    params = VDevice.create_params()
    params.scheduling_algorithm = HailoSchedulingAlgorithm.ROUND_ROBIN
    params.multi_process_service = True
    params.group_id = "SHARED"
    target = VDevice(params)

    # Configure the network group with batch size 1
    configure_params0 = ConfigureParams.create_from_hef(hefs[0], interface=HailoStreamInterface.PCIe)
    model_name0 = hefs[0].get_network_group_names()[0]
    batch_size = 1
    configure_params0[model_name0].batch_size = batch_size
    network_groups0 = target.configure(hefs[0], configure_params0)
    network_group0 = network_groups0[0]

    # Request float32 data on both the input and output vstreams
    input_vstreams_params0 = InputVStreamParams.make(network_group0, quantized=False, format_type=FormatType.FLOAT32)
    output_vstreams_params0 = OutputVStreamParams.make(network_group0, quantized=True, format_type=FormatType.FLOAT32)
    input_vstream_info0 = hefs[0].get_input_vstream_infos()[0]
    output_vstream_info0 = hefs[0].get_output_vstream_infos()[0]

    # img_array is passed unchanged: the same array that is given to the ONNX model
    input_name = input_vstream_info0.name
    input_dict = {input_name: img_array}
    with InferVStreams(network_group0, input_vstreams_params0, output_vstreams_params0, tf_nms_format=True) as infer_pipeline0:
        output_data = infer_pipeline0.infer(input_dict)
    tt = output_data[output_vstream_info0.name].squeeze()
    return tt


def read_img(img_path):
    # Resize, center-crop to 224x224, convert to a CHW float tensor in [0, 1], then normalize
    img = Image.open(img_path).convert('RGB')
    img = TF.resize(img, 224, transforms.InterpolationMode.LANCZOS)
    img = TF.center_crop(img, (224, 224))
    img = TF.to_tensor(img).to('cpu')
    img = normalize(img)
    img_np = img.numpy()
    # Add the batch dimension: (1, 3, 224, 224)
    img_array = np.expand_dims(img_np, axis=0)
    return img_array


img_array = read_img('./mountain-477832_1280.jpg')
onnx = onnx_infer('./clip_vit_b_16.onnx', img_array)
hef = hef_infer('./clip_vit_b_16_image_encoder.hef', img_array)

# Compare the two embeddings
cos_sim = np.dot(onnx, hef) / (np.linalg.norm(onnx) * np.linalg.norm(hef))
l2_dist = np.linalg.norm(onnx - hef)
print(f"cos_sim:{cos_sim},l2_dist:{l2_dist}")
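One way to narrow this down is to send the HEF a few variants of the same image and see which one brings the cosine similarity closest to 1. The sketch below rests on two assumptions that are not confirmed anywhere in this post: that the HEF may expect NHWC input rather than the NCHW layout the ONNX model takes, and that normalization may have been folded into the HEF at compile time, so that it expects raw 0-255 pixel values. The helper names (`nchw_to_nhwc`, `denormalize_to_pixels`, `candidate_hef_inputs`) are hypothetical and only illustrate the idea.

```python
import numpy as np

# CLIP normalization constants, copied from the script above.
CLIP_MEAN = np.array([0.48145466, 0.4578275, 0.40821073], dtype=np.float32)
CLIP_STD = np.array([0.26862954, 0.26130258, 0.27577711], dtype=np.float32)


def nchw_to_nhwc(batch: np.ndarray) -> np.ndarray:
    """Reorder a (N, C, H, W) batch to (N, H, W, C) as a contiguous array."""
    return np.ascontiguousarray(batch.transpose(0, 2, 3, 1))


def denormalize_to_pixels(nhwc: np.ndarray) -> np.ndarray:
    """Undo CLIP normalization on an NHWC batch and return float32 values in 0-255."""
    pixels = (nhwc * CLIP_STD + CLIP_MEAN) * 255.0
    return np.clip(np.rint(pixels), 0, 255).astype(np.float32)


def candidate_hef_inputs(img_array: np.ndarray) -> dict:
    """Build the input variants to try against the HEF, keyed by a descriptive label."""
    nhwc = nchw_to_nhwc(img_array.astype(np.float32))
    return {
        # Exactly what the script above sends (assumed to be NCHW, normalized).
        "nchw_normalized_float32": img_array,
        # Assumption 1 only: layout changed, values untouched.
        "nhwc_normalized_float32": nhwc,
        # Assumptions 1 and 2: layout changed and normalization undone.
        "nhwc_raw_0_255_float32": denormalize_to_pixels(nhwc),
    }
```

Each variant could then be passed to `hef_infer` in place of `img_array` and compared against the ONNX output with the same cosine similarity and L2 distance as above. The raw-pixel variant is kept as float32 so that it matches the `FormatType.FLOAT32` input vstream used in the script.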

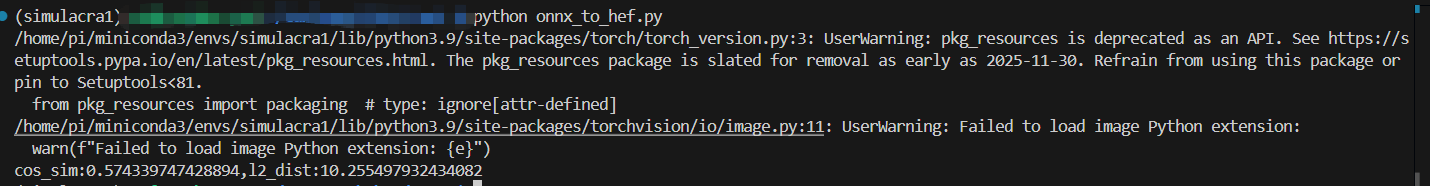

The following is the running result