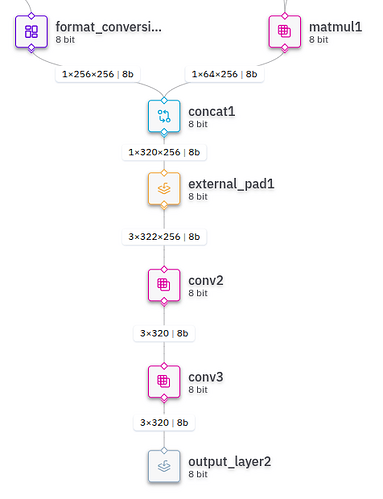

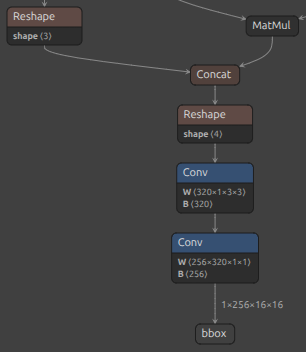

I am trying to parse part of a Siamese object-tracking model from ONNX to HAR. Very early in the graph, after the reshapes, the parser "forgets" the spatial metadata of the data tensors. As a result, it simply ignores reshape operations from the ONNX graph, applies spatial operations incorrectly, and so on. Please find attached the relevant parts of the ONNX and HAR graphs. One can see that in the HAR graph the reshape node after the concatenation is ignored, and the external_pad node before the depthwise convolution then pads the wrong dimensions. The tensor becomes 3x322x256 and produces some 3x320 nonsense data afterwards, instead of being split and then padded to 1x320x18x18.
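To make the mismatch concrete, here is a minimal sketch of the shape arithmetic in plain Python. It is only an illustration under assumptions: I am assuming the concatenation output is 3x320x256 (inferred from the 3x322x256 result), that 256 is a flattened 16x16 map, and that the pad is 1 on each side. The helper `pad_shape` is hypothetical and is not part of any parser API.

```python
def pad_shape(shape, pads):
    """Return the shape after zero-padding.

    pads maps an axis index to a (before, after) pair; other axes are untouched.
    """
    return tuple(
        dim + sum(pads.get(axis, (0, 0))) for axis, dim in enumerate(shape)
    )


# Assumed layout after the concatenation: 3 branches, 320 channels,
# 16 * 16 = 256 flattened spatial positions.
concat_shape = (3, 320, 256)

# Intended path: take one branch, restore H x W, then pad both spatial axes by 1.
spatial_shape = (1, 320, 16, 16)
intended = pad_shape(spatial_shape, {2: (1, 1), 3: (1, 1)})

# Observed path: the reshape is dropped and the padding lands on the 320 axis.
observed = pad_shape(concat_shape, {1: (1, 1)})

print(intended)  # (1, 320, 18, 18)
print(observed)  # (3, 322, 256)
```

The two results match the shapes described above: the intended 1x320x18x18 and the 3x322x256 that the HAR graph actually produces.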

I tried adding a zero tensor to the output of the concatenation node, and also tried inserting an identity convolution after it, to force the parser to infer the intended shape. However, in every case the model failed to parse because of a shape mismatch. The parser omits the reshape node and then breaks because of the invalid shapes it gets as a result.

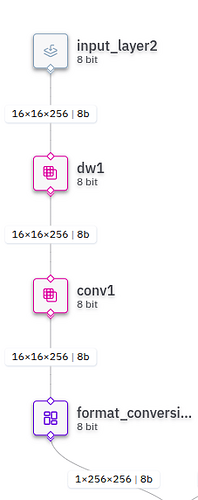

The same problem appears anywhere in the graph once the input data has been reshaped. In the screenshot I attached, one can see that at the beginning of the graph the parser correctly identifies the same depthwise convolution and parses it properly.

The third image I attached also shows that after the first reshape (which was parsed as "format_conversion"), the data tensor gets an extra leading 1x dimension, which HAR graphs usually do not have. I interpret this as a sign that the parser is losing the spatial metadata.

What could be done to resolve this and parse the model correctly? So far I feel like I have tried everything I could on the PyTorch / ONNX side. Nothing I changed in the model source code affected this; the problem persists.

Thank you for your help!